10 A relational approach to agentic AI in social science research: Projects, roles, and tasks

Thomas Davidson, Rutgers University-New Brunswick

Abstract:

AI usage statement: I developed the idea for the paper myself and used a voice conversation with ChatGPT to take notes. In the same conversation, I asked ChatGPT some questions about related work and for advice on how I might structure the essay. This helped to refine my idea, although I decided not to follow the structure in my write-up. I drew the diagram by hand and used ChatGPT to generate TikZ code to render it from a photo. This involved a lot of back-and-forth. The essay was written manually in Word with some light editing suggestions from Grammarly. I used Claude Code to provide instructions for converting the paper to Markdown and uploading it to GitHub. I did not enable it to make any other edits to the text, including copying material, but I allowed it to replace parenthetical references with the correct style based on my bib file. The latest paid versions of each system available in March-April 2026 were used. This model of AI usage is closest to the augmented research model described in Section 10.2.2.

10.1 Introduction

Social scientists are increasingly incorporating generative AI into the research process. In my field, sociology, many scholars already use these technologies for tasks such as planning, writing, and data analysis (Alvero et al. 2026). This essay focuses specifically on the use of AI to aid research rather than its methodological applications (Bail 2024; Davidson 2024; Davidson and Karell 2025), although the line between these two uses has admittedly become increasingly blurred. Over the past couple of years, how we interface with generative AI has shifted from back-and-forth copy-and-pasting to dialogue with agentic tools that can access and manipulate files within a computing environment and perform tasks autonomously. Critically, these agentic capabilities can enable AI to perform an increasing number of tasks in the research process that were previously impossible to automate. As agentic AI has grown in both popularity and capabilities over the past year, there has been an influx of interest among academics and much debate on social media and across blogs (Messing and Tucker 2026).

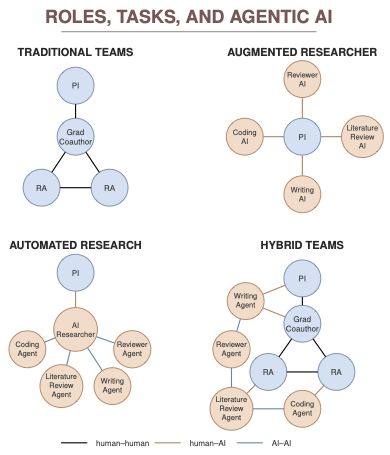

The goal of this note is to develop a framework for thinking through how AI is used in the research process and the implications of different uses. Specifically, I propose a relational, role-based approach to contextualize the use of these tools. We can think of research projects as involving one or more researchers who take on different roles, each performing distinct tasks. Tasks serve as a useful unit of analysis for approaching AI capabilities vis-à-vis human labor (Brynjolfsson, Chandar, and Chen 2025; Handa et al. 2025; Patwardhan et al. 2025). In what follows, I present four stylized representations of research teams and advocate for a hybrid approach that combines the strengths of humans and AI. The accompanying figure presents an example of each model. Inspired by recent work emphasizing the need to understand the relationships between humans and machines (Tsvetkova et al. 2024), actors are represented as nodes, with color indicating whether they are human or AI, and edges denote relationships, distinguishing between human-human, human-AI, and AI-AI ties. In general, advances in AI have led to a proliferation of human-AI and AI-AI ties, some of which may supplant human-human ties that have, until recently, been central to the research endeavor.
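To make the node-and-edge representation concrete, the following is a minimal Python sketch of how a project could be encoded and its ties classified. The actor names, the edge lists, and the helper functions `tie_type` and `count_ties` are illustrative assumptions, not a transcription of the figure.

```python
from collections import Counter

HUMAN, AI = "human", "AI"


def tie_type(kind_a, kind_b):
    """Label an edge by the kinds of the two actors it connects."""
    # Put "human" first so (AI, human) and (human, AI) share one label.
    first, second = sorted([kind_a, kind_b], key=lambda kind: kind != HUMAN)
    return f"{first}-{second}"


def count_ties(actors, edges):
    """Count human-human, human-AI, and AI-AI ties in one project."""
    return Counter(tie_type(actors[a], actors[b]) for a, b in edges)


# Hypothetical traditional team: every actor is human.
traditional_actors = {"PI": HUMAN, "grad": HUMAN, "ra_1": HUMAN, "ra_2": HUMAN}
traditional_edges = [("PI", "grad"), ("grad", "ra_1"), ("grad", "ra_2")]

# Hypothetical automated project: one human instructs a lead agent,
# which in turn coordinates three sub-agents.
automated_actors = {"PI": HUMAN, "lead": AI, "coder": AI, "writer": AI, "reviewer": AI}
automated_edges = [("PI", "lead"), ("lead", "coder"), ("lead", "writer"), ("lead", "reviewer")]

traditional_ties = count_ties(traditional_actors, traditional_edges)
automated_ties = count_ties(automated_actors, automated_edges)
print(dict(traditional_ties))
print(dict(automated_ties))
```

Under these assumed edge lists, the traditional team contains only human-human ties, whereas the automated project has a single human-AI tie and otherwise consists of AI-AI ties, which is the shift in relational structure the framework is meant to highlight.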

10.2 Four stylized approaches

10.2.1 Traditional projects

I begin by considering the traditional project, which describes most research in the social sciences prior to the development of generative AI. The top-left panel of the figure shows an example in which a principal investigator (PI), perhaps an assistant professor, leads a team consisting of a graduate student co-author and two undergraduate research assistants, who collaborate on a research project. Such a team involves relationships among people occupying different roles, and each person has different tasks. For example, the undergraduate researchers might help conduct a literature review and annotate data; the graduate student might implement analyses and draft parts of the manuscript; and the PI might perform the analysis and write or edit the final draft. While there are many possible configurations of such projects, we can think of the research project as involving the distribution of tasks across people with different roles. It has become increasingly common for social science papers to involve teams like this, although some disciplines still cling to vestiges of a sole-authored model more prevalent in the humanities. Here, I have delimited the boundaries to the primary team, but there are typically other people occupying different roles, such as colleagues who provide feedback on a draft manuscript and peer reviewers who critique the paper, who also contribute to the finished research product.

10.2.2 Augmented research

Artificial intelligence can enable a single researcher to perform many tasks that previously would have been distributed across a research team. AI chatbots can perform tasks like conducting a literature review, annotating data, writing code, and drafting and editing a manuscript. This scenario, in which I have depicted a PI interacting with AI for different tasks, is one many researchers have found themselves in over the past few years. Here, AI can play more narrowly circumscribed roles, assisting with specific tasks. As Mollick (2024) describes, this might entail prompting the AI to adopt a persona (e.g., “You are an expert in survey analysis …”), but it could simply involve a series of task-specific conversations. It is important, however, to distinguish between automation—where a task can be completed without human intervention—and augmentation—where AI can assist but requires human intervention. A report by Anthropic analyzing chat logs provides evidence of a mix of automated and augmented tasks in recent AI use (Handa et al. 2025). In this scenario, the AI-augmented PI is still involved in each task, producing the research by engaging in many back-and-forth conversations with AI chatbots, or perhaps using agents for some tasks.

The delegation of tasks to agents has upsides for the PI. As studies on AI and productivity have shown (Noy and Zhang 2023), AI can speed up the research process. Unlike students—who get tired or are unavailable during midterms or when they need to grade—AI agents are available around the clock. Moreover, such a configuration does not necessarily entail the replacement of human labor, as AI can enable more ambitious research projects for social scientists who may not have the resources to assemble a team like the one described in the previous example. While there is much debate about whether researchers should do this and the extent to which AI can be relied upon to conduct these tasks with sufficient rigor—and I certainly do not mean to endorse such a model—it is now possible for a single researcher to use AI to augment their capabilities and perform many tasks that would have previously required additional human labor.

10.2.3 Automated research

Much of the recent enthusiasm about agentic AI has centered on the automation of the research process. In contrast to the augmented model, where a PI incorporates inputs from different AI systems to produce the product, agentic AI enables a PI to delegate this relation management to AI itself. As shown in the diagram, a PI might provide instructions to an AI agent, which then delegates tasks to specialized sub-agents. Importantly, the research project no longer involves only human-AI interactions but also a series of AI-AI relations. For example, a coding agent might implement, run, and debug analysis code; a writing agent could then draft a manuscript; and a reviewer agent could critique and refine the process.
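The delegation structure described here can be sketched in a few lines of Python. This is a toy illustration under stated assumptions: the sub-agents are stub functions that return placeholder strings rather than calls to any real AI system, and the names `LeadAgent`, `coding_agent`, `writing_agent`, and `reviewer_agent` are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


# Stub sub-agents (assumption): a real system would call an AI model here.
def coding_agent(task: str) -> str:
    return f"[code and results for: {task}]"


def writing_agent(task: str) -> str:
    return f"[draft based on: {task}]"


def reviewer_agent(task: str) -> str:
    return f"[critique of: {task}]"


@dataclass
class LeadAgent:
    """Receives the PI's instructions and passes work along to sub-agents."""

    sub_agents: Dict[str, Callable[[str], str]]
    # Each entry records one (sender, receiver) relation.
    log: List[Tuple[str, str]] = field(default_factory=list)

    def run(self, instructions: str) -> str:
        self.log.append(("PI", "lead"))  # the only human-AI tie
        output = instructions
        for name, agent in self.sub_agents.items():
            self.log.append(("lead", name))  # an AI-AI tie
            output = agent(output)  # each agent builds on the previous output
        return output


lead = LeadAgent({"coder": coding_agent, "writer": writing_agent, "reviewer": reviewer_agent})
result = lead.run("test whether X predicts Y")
print(result)
print(lead.log)
```

In this sketch the PI appears in the log only once, when issuing the initial instructions. Every subsequent exchange is between the lead agent and a sub-agent, which is what distinguishes this configuration from the augmented model.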

Until recently, such a workflow was the domain of science fiction, but advances in agentic AI have enabled the automation of much of the research workflow. There has been discussion as to whether a human would even be necessary, as an agentic AI could theoretically develop end-to-end research projects without guidance. As things stand, the capability to manage an agentic workflow in which sets of AI agents can perform research tasks has tremendous potential, but unless we reach a “superintelligence” scenario in which the AI is better than any researcher, I am skeptical that the PI can be replaced entirely. Indeed, most tasks would likely be improved by an augmented approach where an astute researcher uses AI for assistance.

10.2.4 Hybrid human-AI research teams

Augmented and automated research replace the roles previously occupied by human participants (graduate students and undergraduate research assistants) with AI. In contrast, I expect that a hybrid approach will be the most fruitful way to incorporate AI agents into research projects. In this example, the same core team is present, along with various AI agents. This hybrid system entails the reallocation of tasks across roles, leveraging the strengths of humans and AI. For example, the PI and graduate student might still enlist an AI agent to help write code for the analysis. Coding agents might empower an undergraduate research assistant to be involved in portions of the coding that were previously beyond their expertise. By using an AI agent to conduct portions of the literature review, the research team might free up the research assistants to spend more time annotating and validating the data. In this scenario, the researcher can leverage the strengths of agentic AI to tackle certain tasks, while leaving others to the research team. A consequence of this hybrid approach is not only the capacity to reallocate tasks, but also the potential to change roles. The PI might have more time to engage in hands-on data analysis, while the graduate student might be able to take on more of the decisions previously left to the PI.

Hybrid teams have the potential to improve social science research by incorporating the strengths of human and AI participants. Agentic AI is arguably better at coding, debugging, and data checking than the vast majority of social scientists, and has the bandwidth to review more literature and conduct more computational experiments than is practical for most people. At the same time, social scientists possess expert training, including deep domain expertise, understanding of their fields, and a taste that cannot be replicated in silico. It is also important, for pedagogical reasons, to have roles in which students and others complete some tasks themselves, even where automation is practical. In the most optimistic scenario, hybrid teams could enable the PI to direct their energies where most effective and to provide opportunities for others to train, while leveraging the strengths of AI to automate some tasks. This could allow high-quality output to be efficiently produced without compromising on scientific rigor.